Hey folks, this article is a complete roadmap to help you become job-ready as a DevOps engineer. It mainly focuses on the DevOps Engineer role; however, it also covers some topics needed for other roles such as Cloud Consultant, SRE, Kubernetes Engineer, and Developer Advocate. So let's get started.

What is DevOps

Before we dive into the actual tools and technologies required for DevOps, we must first understand what DevOps is. Feel free to skip this section if you already know what DevOps is.

DevOps is the combination of cultural philosophies, practices, and tools that increases an organization’s ability to deliver applications and services at high velocity.

What on earth does this mean? In a traditional, non-DevOps environment, we have a development team and an operations team. The developers, as you can guess, handle the development of an application, creating and implementing new features. The operations team, on the other hand, handles testing, infrastructure, scaling, the stability of the software, and more.

DevOps unifies these two roles into one, hence the name: Dev (Development) plus Ops (Operations). In practice, DevOps relies heavily on testing, and automating those tests saves time and increases productivity. A DevOps workflow also lets developers ship more code in less time, because bugs and errors are caught by automated checks instead of being hunted down manually.

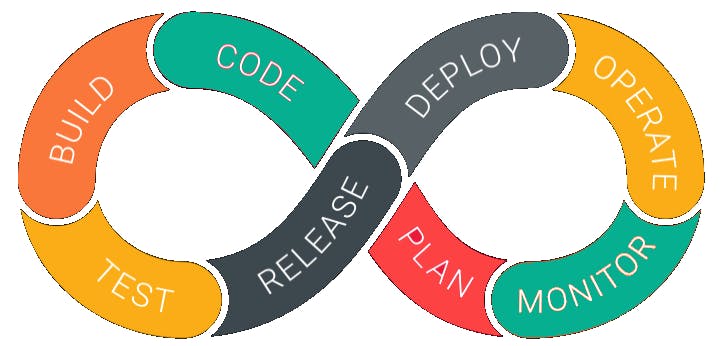

A typical DevOps lifecycle looks like this

Firstly, you have the planning phase, where you decide what features you want to create. Then you move on to coding, building, testing, release, deployment, operating, and monitoring it. One thing to mention is that in every single stage you test your software to make sure it works as intended.

Opportunities with DevOps

Now that you know what DevOps is, the next question that comes to mind is: what sort of opportunities are there in DevOps?

Right now, DevOps engineers are in high demand, and companies are constantly looking to hire them. From the previous section, you can guess why the demand is so high. Apart from the DevOps engineer role itself, these practices are also useful in other development fields. Why is that so? I'll answer this question when we come to those sections.

Prerequisites

Now let's get started with the actual roadmap. As with any technology, there are some prerequisites you should understand first.

Linux

Let's start with Linux, one of the skills that should be in every developer's toolbox. Linux has many different distributions, such as Ubuntu, CentOS, Kali, Arch, and many more. You should have a good understanding of the Linux command line and know your way around it. Here is a list of things you should know about Linux.

- Distros: Linux has a ton of different distributions, each intended for different uses.

- Command Line: You should know how to navigate around Linux using the terminal, with commands such as `cd`, `ls`, `mkdir`, `vi`, etc. You should also know some networking commands such as `ping`, `netstat`, `nslookup`, and more.

- User Management: Learn about creating multiple users and managing their permissions.

- Text editor (Vim): You should know how to use the terminal text editor known as `vim` or `vi`. This is useful if you want to change just one or two lines in a YAML file and don't want to leave the terminal to do it.

- Processes: Knowing how processes work in Linux is important if you need to find and terminate (kill) certain processes for any reason.

- Package managers: Package managers are an easy way to download and install tools and software on a Linux distro. Most distros ship with their own package manager, such as `apt` on Ubuntu or `pacman` on Arch.

Now let's talk about why you need Linux. As a DevOps engineer, you will have to automate certain tasks, which means writing small automation scripts. You can write these with Bash, Python, or Go.

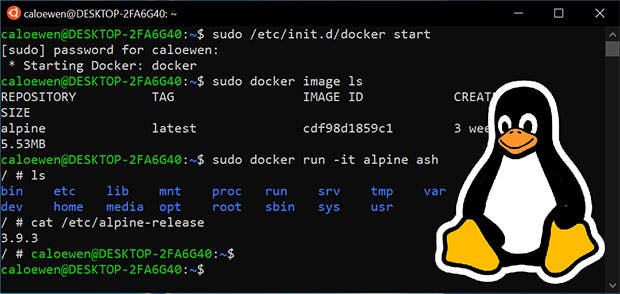

Another reason you will need Linux is that most containers run Linux and rely on features of the Linux kernel. What is a container? We will look into that later in this article. Also, a lot of tools that make a DevOps engineer's life easier run on Linux.

Resources

- Introduction to Linux by Linux Foundation

- Linux Crash Course by Free Code Camp

- Introduction to Linux by Kunal Kushwaha

- Linux Masterclass by Apoorv Goyal

Computer Networks

Now let's move on to the next part, Computer Networking. Why would you need to know about networking? As a DevOps engineer, you are going to work with Kubernetes a lot, and in Kubernetes you often have to configure how the cluster connects to the internet or to other networks. This also plays into data security: you need to make sure your application cannot be accessed by third parties without permission. So what topics do you need to know?

- How do systems communicate with each other?

- Types of network areas (WAN / LAN / WLAN)

- What is a switch, router, and ISP?

- Internet addresses and their types

- OSI model or TCP/IP model

- Network Protocols

- Basics of subnetting

- Basics of DNS

- Switching and Routing

Now, these may seem like a lot of topics, and they are, but you do not need to go too in-depth into them. A basic understanding of each is sufficient.
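
Subnetting is mostly arithmetic on prefix lengths: a `/n` IPv4 prefix leaves `32 - n` bits for hosts, so a `/24` holds 2^8 = 256 addresses. The sketch below uses Go's standard `net` package to compute the size of a CIDR block and to check whether an address falls inside it; the example addresses are arbitrary private-range values.

```go
package main

import (
	"fmt"
	"net"
)

// subnetSize returns how many addresses an IPv4 CIDR block contains.
// IPv6 prefixes are rejected to keep the arithmetic simple.
func subnetSize(cidr string) (uint64, error) {
	_, ipnet, err := net.ParseCIDR(cidr)
	if err != nil {
		return 0, err
	}
	ones, bits := ipnet.Mask.Size() // e.g. 24, 32 for "10.0.1.0/24"
	if bits != 32 {
		return 0, fmt.Errorf("%s is not an IPv4 prefix", cidr)
	}
	// The remaining (32 - ones) bits identify hosts within the subnet.
	return 1 << uint(bits-ones), nil
}

// inSubnet reports whether the address ip lies inside the CIDR block.
func inSubnet(cidr, ip string) (bool, error) {
	_, ipnet, err := net.ParseCIDR(cidr)
	if err != nil {
		return false, err
	}
	addr := net.ParseIP(ip)
	if addr == nil {
		return false, fmt.Errorf("invalid IP address %q", ip)
	}
	return ipnet.Contains(addr), nil
}

func main() {
	size, err := subnetSize("10.0.1.0/24")
	if err != nil {
		panic(err)
	}
	// Conventionally the network and broadcast addresses are reserved.
	fmt.Println("addresses:", size, "usable hosts:", size-2)
	for _, ip := range []string{"10.0.1.42", "10.0.2.1"} {
		ok, _ := inSubnet("10.0.1.0/24", ip)
		fmt.Println(ip, "in 10.0.1.0/24:", ok)
	}
}
```

Keep in mind that cloud providers often reserve a few extra addresses in each subnet, so the number of usable hosts there can be lower than `size - 2`.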

Resources

- O'Reilly's Network and Kubernetes Book

- CCNA Course Part 1. Part 1 of this course is sufficient.

- Computer Networking course by Kunal Kushwaha

- NetworkChuck for more advanced concepts. Not required for starting out, but is good information to know later on.

YAML

YAML is a data serialization language, similar to XML and JSON. We use YAML to write configuration files for Kubernetes. Kubernetes also accepts JSON, but YAML is usually preferred because it is easier to write, cleaner, and easier to read than formats like XML. You can use the above image as a comparison. YAML is also quite easy to learn.

Git and GitHub

Git is a command-line tool used for version control. GitHub, on the other hand, is a platform that hosts your Git repositories online, so that is where the code you write is stored. Version control systems are needed regardless of which tech domain you work in. GitHub is a great choice because, apart from pushing your code to a repository, you can use GitHub Actions, which lets you create things like a testing pipeline, automatic dependency updates, and more.

Resources

- A beginners course on Git and GitHub by Free Code Camp

- A Complete Git and GitHub tutorial by Kunal Kushwaha

- Git for Professionals by Free Code Camp

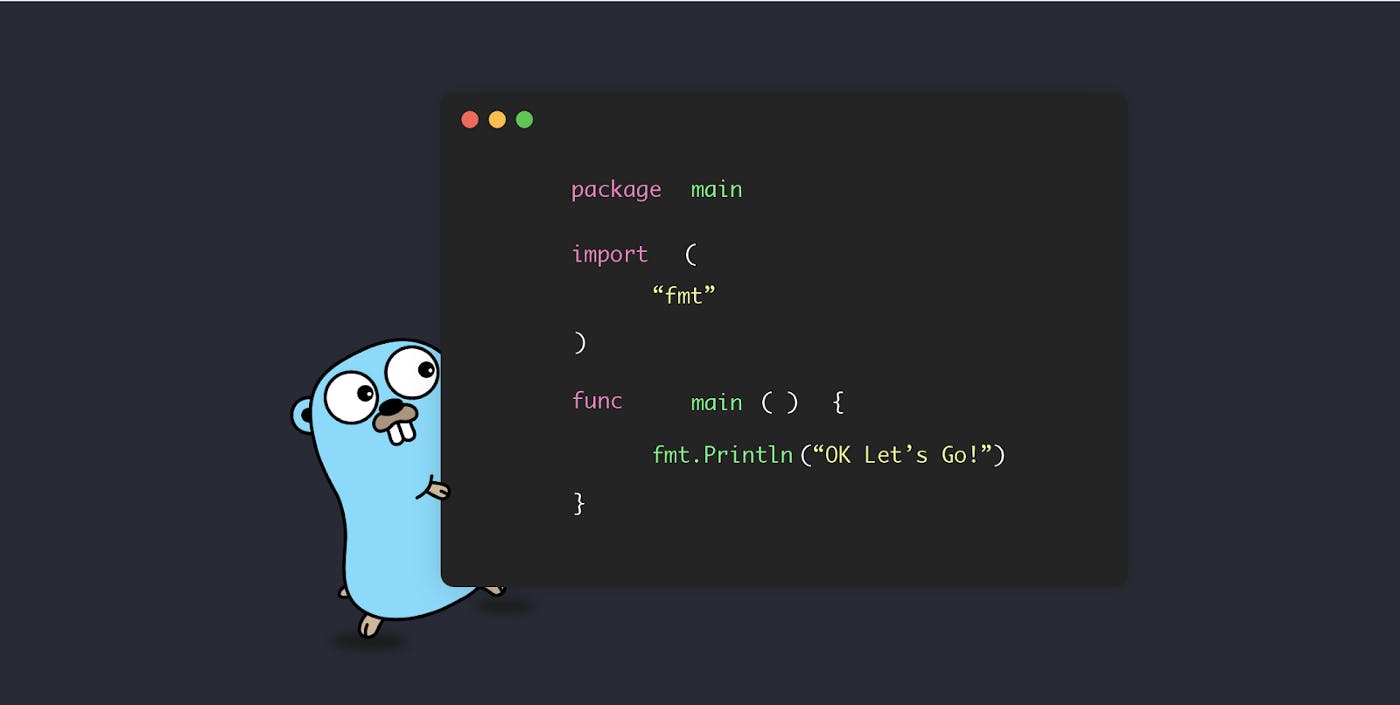

Golang

Now we are getting to the fun stuff. Let's talk a bit about Go (Golang). For DevOps, extensive programming knowledge isn't necessary, but you should know enough to write short automation scripts. You can also use Python for scripting, but Go is very popular in the DevOps world: it compiles to fast, single-file binaries, and its goroutines make it easy to run multiple functions concurrently. Many core DevOps tools, including Docker, Kubernetes, and Terraform, are written in Go. For a full list of Go benefits, you can read this article.

Resources

- Go Tutorial for beginners by TechWorld with Nana

- Learn Go Programming by Free Code Camp

- Learn Go by building 11 projects by Free Code Camp

Cloud

We discussed earlier that DevOps engineers work on the 'Operations part' as well. So it is important to know cloud concepts, along with how to deploy your application using cloud providers like AWS, Azure, GCP, Civo, etc. You should also know about storage, networking, compute, and billing on these platforms. In a nutshell, you should know the basics of deploying and managing your application with a cloud provider.

So this is a list of things you should know about the cloud. Of course, there are more things than these, but we will cover those in their specific sections of this article.

- Virtualization

- Infrastructure as a Service

- Platform as a Service

- Software as a Service

- Setting up networks

- Provisioning cloud resources

- Kubernetes

Resources

- Various cloud providers such as GCP and Azure have a tutorial for their services, but the core concepts and methods are the same.

- AWS Certified Cloud Practitioner by Free Code Camp

- Introduction to Cloud Infrastructure Technologies by The Linux Foundation.

Important Note: The Linux Foundation course is an advanced course, but it is great once you know the basics. If you've never used cloud services before, I recommend starting with the AWS Cloud Practitioner course.

Docker/Containers

Now let's take a look at containers. Containers are an isolated environment for your applications to run in. In a nutshell, your application is a product to be delivered to someplace, and a container is the packaging for it. Containers are a form of virtualization, but they are different from virtual machines.

Now, why are they important? Before container technologies, we would need to provision a virtual machine with a complete installation of an operating system (mainly a Linux distro), and then configure our application in it. This consumed a lot of resources and was expensive as well. And before virtual machines, we had to buy an entire physical server and set it up. You can imagine how expensive that could be.

This is where containers come into the picture. A container has only the minimum files needed to run your application, so by default you only get a command line, with no graphical desktop. Containers run in isolated environments, i.e., by default they cannot access the files on your host operating system. Let me simplify this with an example. Let's say you work on a MacBook and you create an Ubuntu (Linux) container. While you use this containerized Ubuntu, you will not be able to access the files on your MacBook unless you explicitly mount them into the container as a volume.

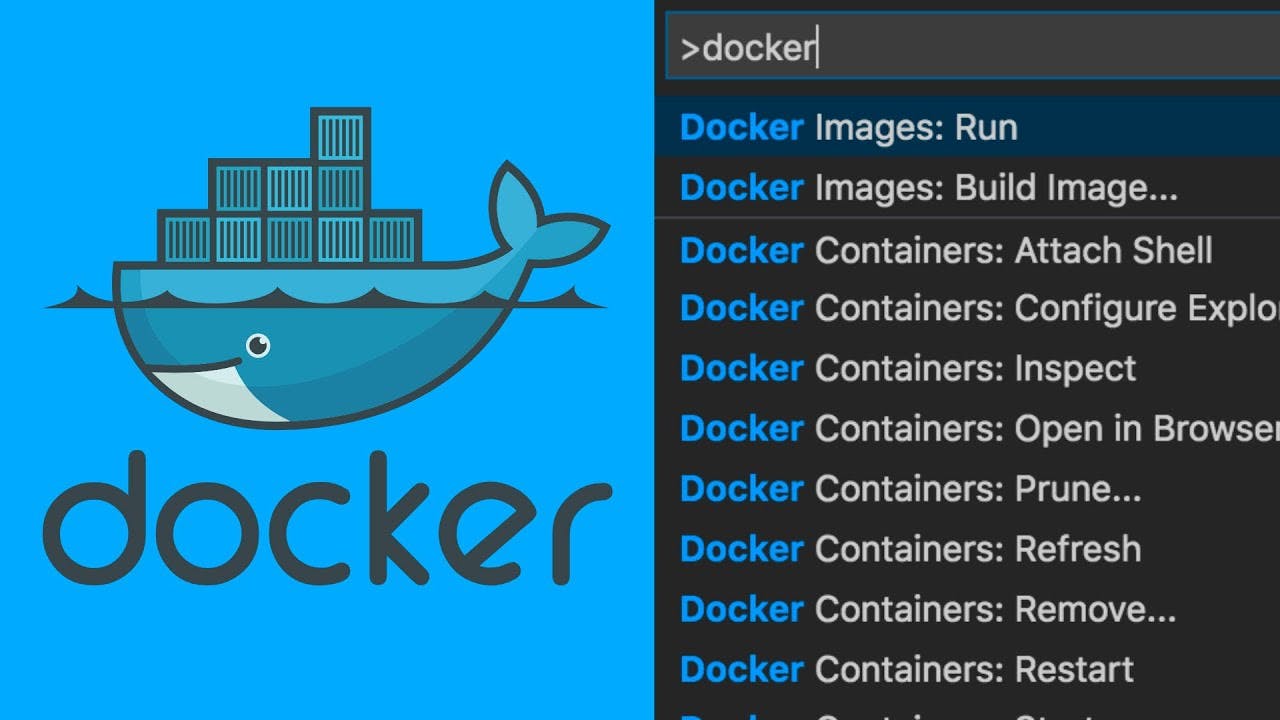

I hope you have a slight idea of why we use containers now. One of the most popular tools for using and creating containers is Docker. You will learn a lot more about containers and Docker from the resources linked below. Since it is such a vast topic, there are a lot of resources.

Resources

- Learn Docker by Free Code Camp

- Docker Tutorial by TechWorld With Nana

- Docker Tutorial by Kunal Kushwaha

- Docker Deep Dive with Nigel Poulton

- Docker File Best Practices

- Docker Security Essentials

- Auditing Docker Security

Kubernetes

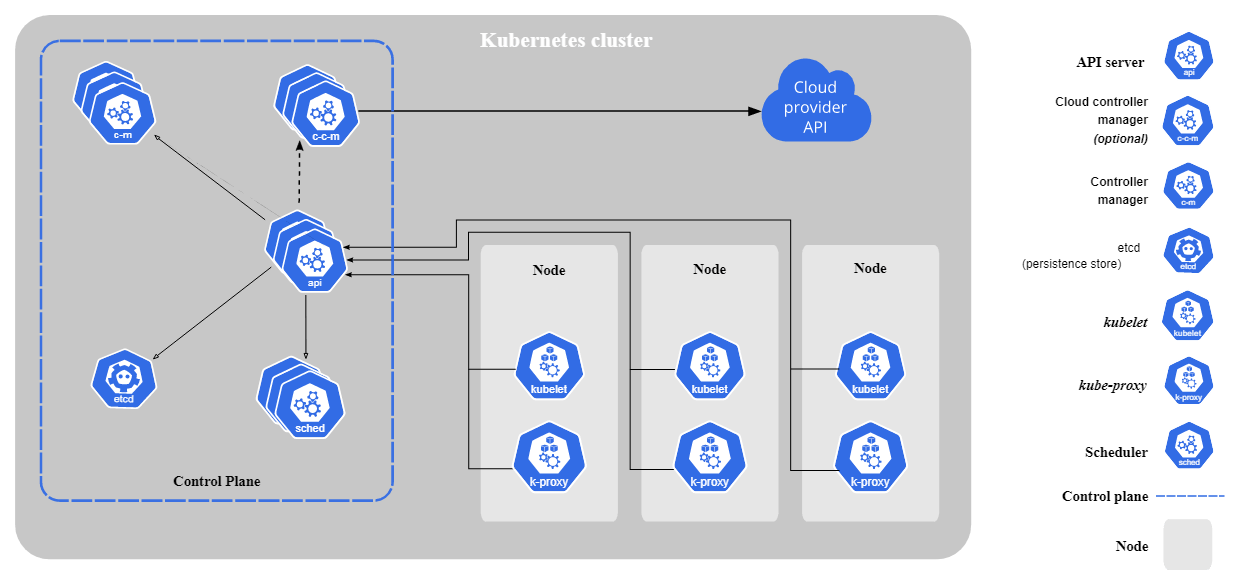

Kubernetes, also known as K8s, is a container orchestrator. If you didn't understand that, don't worry, I didn't either the first time I read it. Above, we talked about containers. We use K8s to tell our containers what to do, how to do it, and how much of it to do. Everything mentioned so far has been building up toward Kubernetes, which is the main thing you will use, along with other tools that extend its functionality.

Kubernetes has a lot of components, but the most basic building blocks are clusters, nodes, and pods. Apart from those, many other components work together to make Kubernetes what it is. Here are some things you should know, along with some great resources.

- What is Kubernetes?

- Architecture

- kubectl commands

- Objects

- Secrets

- ConfigMaps

- Persistent Volumes

- Networking

- Services

Resources

- Kubernetes for Beginners by Kunal Kushwaha

- Civo Academy

- Kube Academy

- Introduction to Kubernetes by The Linux Foundation

- Kubernetes Zero to Hero by TechWorld With Nana

- Official Kubernetes Documentation

- Kubernetes Operators by TechWorld With Nana

- Kubernetes Security by Saiyam Pathak and Dan Pop

- Kubernetes Security Best Practices

- Kubernetes Security with External Operators by Viktor Farcic

Continuous Integration/Continuous Delivery (CI/CD)

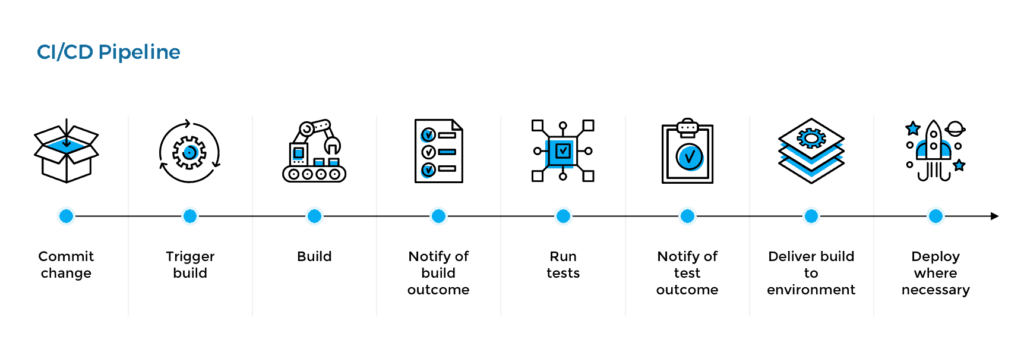

As the name suggests, CI/CD is made up of two parts: Continuous Integration (CI) and Continuous Delivery (CD). The workflow looks something like this.

CI part

- Code is committed

- An image is built with the committed code

- The image is tested to ensure the application works as expected.

Once all the CI checks have passed, we can move on to the CD part. If you have any errors with either building the image or expected results, you will first have to resolve the errors before moving on to the CD part.

CD part

- After passing all checks, your image is staged.

- The image gets deployed and tested to ensure it runs properly.

- And finally, it is added to the release cycle.

To achieve this, there are plenty of tools that handle the CI part and others that handle the CD part, for example CircleCI for CI and Argo CD for CD. To automate the whole pipeline, you can use Jenkins, and the same can also be done with GitHub Actions.

Resources

- Jenkins Complete Course.

- Introduction to GitHub Actions by Kunal Kushwaha

- CI/CD week by Saiyam Pathak

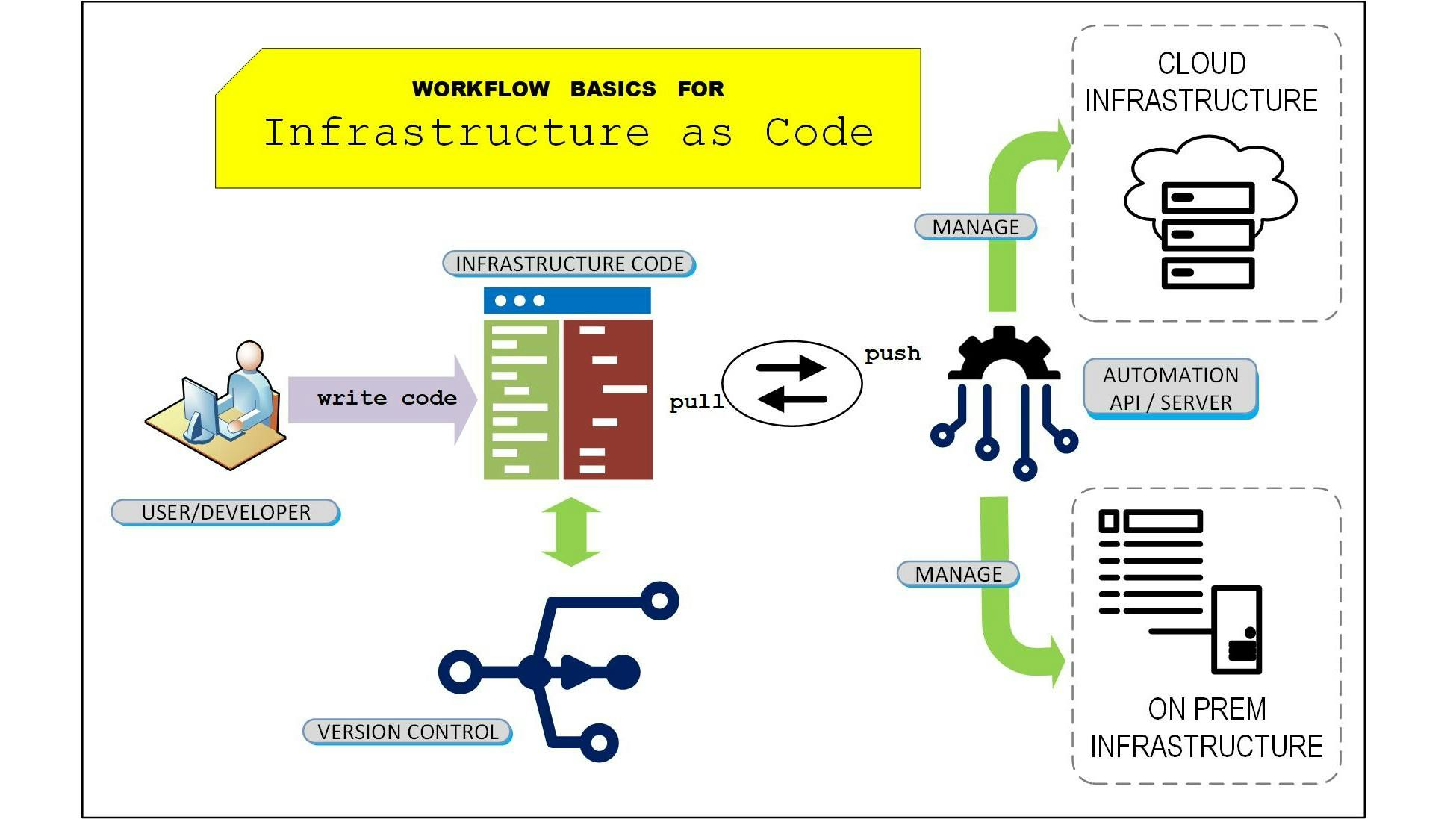

Infrastructure as Code (IaC)

Infrastructure as Code is used to codify your infrastructure: you write code that automates the process of provisioning infrastructure. Now you may ask, why would you need to automate this process? The answer is scaling. As you scale your Kubernetes objects, your resource requirements also grow, and you don't want to be provisioning more resources by hand every time. This is where IaC comes into the picture.

IaC lets you automate creating, updating, and deleting your infrastructure. This greatly reduces the manual errors and misconfigurations that might otherwise occur, and you can also keep track of the state of your infrastructure. You might be asking, "Won't this lead to runaway costs?", and that is a fair concern. There are also tools that help you monitor and optimize costs, but for now, let us just focus on IaC.

IaC can be created with tools such as Terraform, Pulumi, Crossplane and other tools as well. If you are new to the whole IaC concept, Terraform is where you should start.

Resources

- Terraform in 2 hours by Free Code Camp

- Terraform Associate Certification Course by Free Code Camp

- Crossplane Deep Dive by Saiyam Pathak

- Pulumi Tutorial by TechWorld With Nana

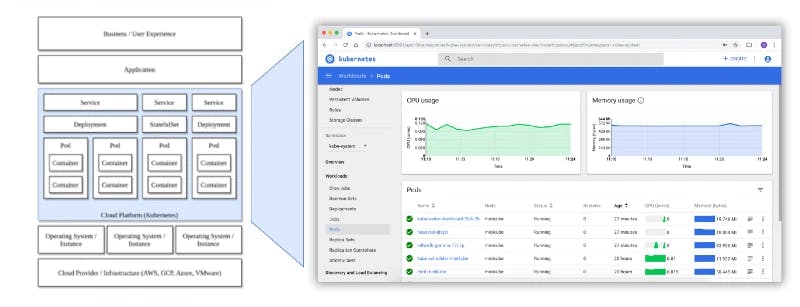

Observability

Observability is the ability to understand what is going on inside your Kubernetes clusters: how many resources they consume, logs of all actions, the latency of and relationships between operations, and how your application is performing. For observability, there are four pillars: Monitoring, Logging, Tracing, and Profiling. Each of them can be handled with different tools.

- Monitoring: Prometheus, Thanos, and Grafana. Prometheus collects metrics, Thanos adds long-term storage and a global view across Prometheus instances, and Grafana visualizes the data. Using all three together gives an extensive look into your cluster.

- Logging: Loki or Elastic

- Tracing: Jaeger

- Profiling: Parca
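
Monitoring with Prometheus works by periodically scraping an HTTP endpoint (usually `/metrics`) that exposes metrics in a simple text format. In a real Go service you would normally use the official client library, `github.com/prometheus/client_golang`; this sketch hand-writes the text format instead, purely to show what Prometheus reads. The metric name `app_requests_total` and the port are assumptions.

```go
package main

import (
	"fmt"
	"log"
	"net/http"
	"sync/atomic"
)

// requests counts handled requests. It is updated atomically because
// net/http serves each request on its own goroutine.
var requests uint64

// renderMetrics formats the counter in the Prometheus text exposition format:
// HELP and TYPE comment lines, then "metric_name value".
func renderMetrics(total uint64) string {
	return "# HELP app_requests_total Total HTTP requests handled.\n" +
		"# TYPE app_requests_total counter\n" +
		fmt.Sprintf("app_requests_total %d\n", total)
}

func main() {
	// Every path except /metrics is counted as an application request.
	http.HandleFunc("/", func(w http.ResponseWriter, _ *http.Request) {
		atomic.AddUint64(&requests, 1)
		fmt.Fprintln(w, "hello")
	})
	// Prometheus would be configured to scrape this endpoint periodically.
	http.HandleFunc("/metrics", func(w http.ResponseWriter, _ *http.Request) {
		w.Header().Set("Content-Type", "text/plain; version=0.0.4")
		fmt.Fprint(w, renderMetrics(atomic.LoadUint64(&requests)))
	})
	log.Fatal(http.ListenAndServe(":8080", nil))
}
```

Grafana would then query Prometheus, for example graphing the request rate derived from this counter over time.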

Resources

- Introduction to Kubernetes Monitoring by Saiyam Pathak

- Getting started with Jaeger

- Getting dirty with Monitoring and Autoscaling Features for Self Managed Kubernetes cluster by Saiyam Pathak

- Introduction to Prometheus

- Thanos Deep Dive

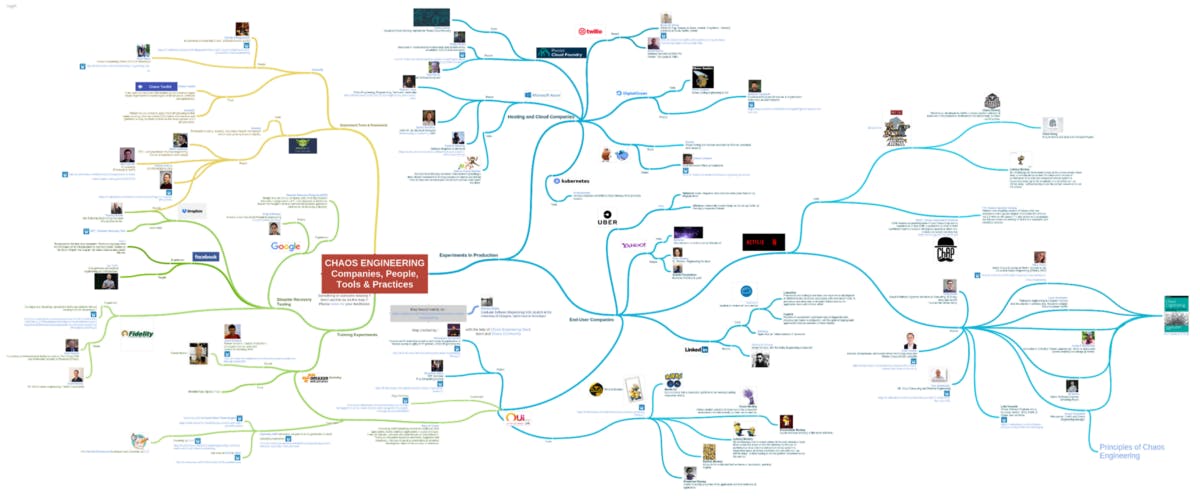

Chaos Engineering

Chaos Engineering adds a bit... actually a lot of chaos into your application. Chaos is exactly what it sounds like: you deliberately inject failures, such as killing pods or adding network latency, and watch how your system reacts. This is done to build robust, resilient systems that keep working when things go wrong, including under heavy load.

There are two main tools for Chaos Engineering: Chaos Mesh and Litmus.

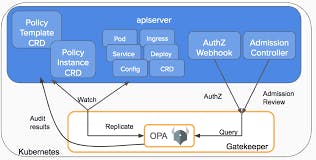

Policy Engines

Kubernetes policies have become very important, as they add a layer of security that protects your Kubernetes clusters from external and internal threats. Policies are rules that define how resources must (or must not) be configured, for example disallowing privileged containers.

This is where policy engines come into play. They come with ready-made security policies and let you write your own, then check your Kubernetes resources against them, typically when the resources are submitted to the cluster, and block the ones that violate a policy.

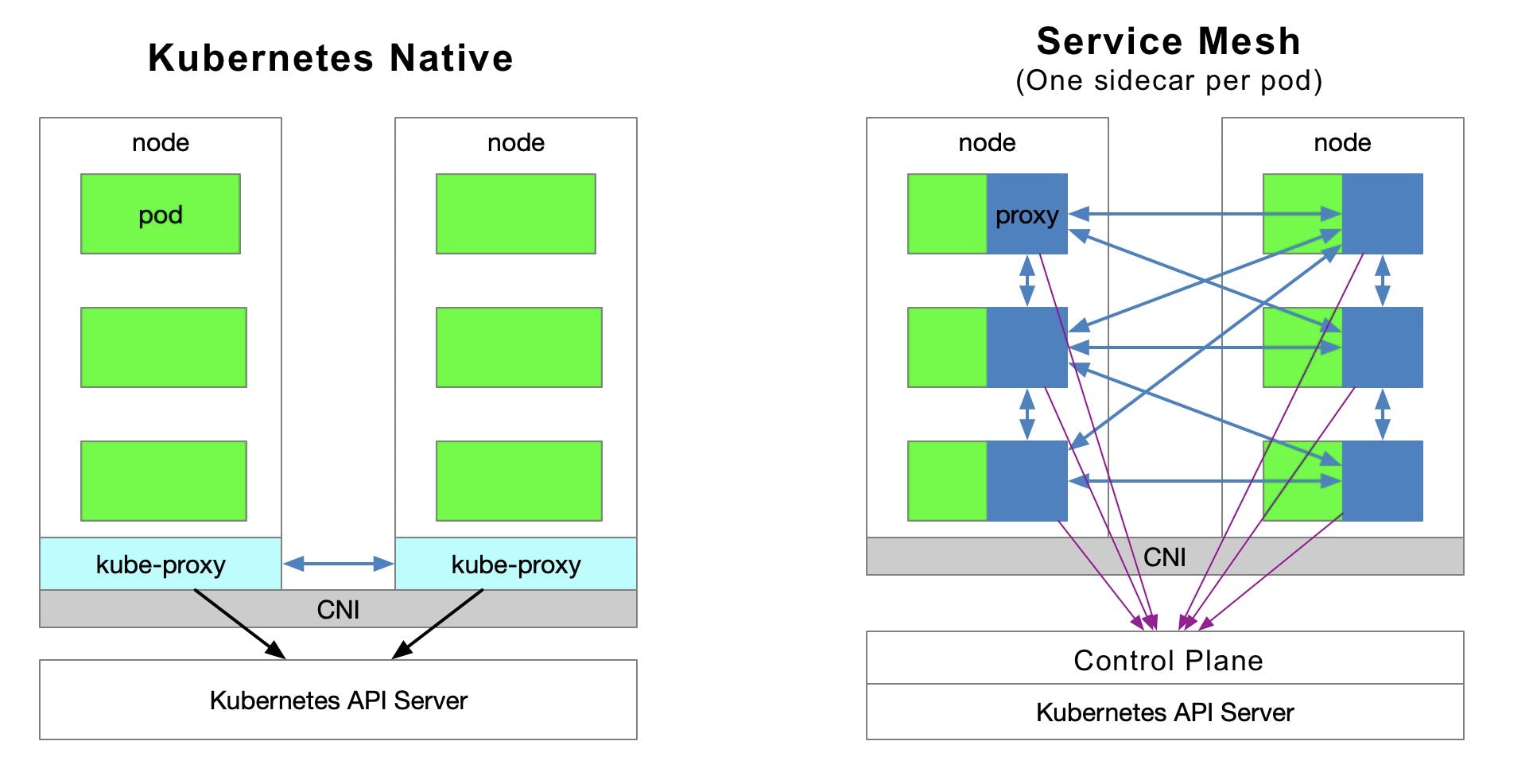

Service Mesh

Service meshes are used to manage the communication between multiple microservices. A service mesh is not required in every Kubernetes cluster, but as the number of services grows, it becomes increasingly valuable for handling traffic routing, retries, encryption (mutual TLS), and observability between services.

Linkerd and Istio are the most popular service mesh options.

Resources

- Introduction to Service Mesh with LinkerD

- Istio and Service Mesh explained simply

- Setup Istio in Kubernetes

Quality of Life tools

Here is a list of a couple of tools that will make your learning journey much easier.

Final notes

If you made it this far, you're awesome! This roadmap should be enough to make you job-ready as a DevOps Engineer. It would take roughly 6 months to complete all the topics mentioned here. If you want to go the extra mile, here are some more things you can learn about:

- Container Security

- Kubernetes Security

- CNCF landscape

- Kubernetes Operators

- Supply Chain Security

Credits

All credit for creating this roadmap goes to Saiyam Pathak. I simply converted it into a text format.